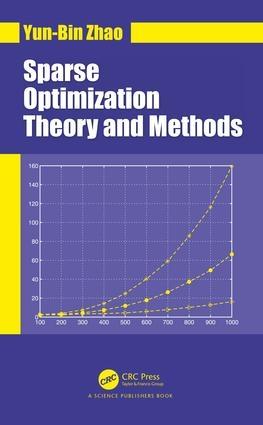

Seeking sparse solutions of underdetermined linear systems is required in many areas of engineering and science such as signal and image processing. The efficient sparse representation becomes central in various big or high-dimensional data processing, yielding fruitful theoretical and realistic results in these fields. The mathematical optimization plays a fundamentally important role in the development of these results and acts as the mainstream numerical algorithms for the sparsity-seeking problems arising from big-data processing, compressed sensing, statistical learning, computer vision, and so on. This has attracted the interest of many researchers at the interface of engineering, mathematics and computer science.

Sparse Optimization Theory and Methods presents the state of the art in theory and algorithms for signal recovery under the sparsity assumption. The up-to-date uniqueness conditions for the sparsest solution of underdertemined linear systems are described. The results for sparse signal recovery under the matrix property called range space property (RSP) are introduced, which is a deep and mild condition for the sparse signal to be recovered by convex optimization methods. This framework is generalized to 1-bit compressed sensing, leading to a novel sign recovery theory in this area. Two efficient sparsity-seeking algorithms, reweighted l1-minimization in primal space and the algorithm based on complementary slackness property, are presented. The theoretical efficiency of these algorithms is rigorously analysed in this book. Under the RSP assumption, the author also provides a novel and unified stability analysis for several popular optimization methods for sparse signal recovery, including l1-mininization, Dantzig selector and LASSO. This book incorporates recent development and the authors latest research in the field that have not appeared in other books.

Preface

Uniqueness of the Sparsest Solution of Linear Systems

Introduction

Spark

Uniqueness via Mutual Coherence

Improved Uniqueness Criteria via Coherence Rank

Babel Function and Sub-Babel Function

Notes

Uniqueness of Solutions to `1-Minimization Problems

Strict Complementary Slackness Property (SCSP)

Least `1-Norm Nonnegative Solution

Least `1-Norm Points in Polyhedra

Notes

Equivalence of `0- and `1-Minimization

Equivalence and Strong Equivalence

Standard `0- and `1-Minimization Problems

Problems with Nonnegativity Constraints

Application to Linear Programming

Equivalence of `0-Problem and Weighted `1-Problem

Sparse Vector Recovery

Sparse Nonnegative Vector Recovery

Notes

Bit Compressed Sensing

Introduction

Sign Measurements and Recovery Criteria

Relaxation Models

Consistency Condition

Reformulation of 1-Bit Compressed Sensing

Nonuniform Sign Recovery

Uniform Sign Recovery

Notes

Stability of Linear Sparse Optimization Methods

Introduction

Hoffmans Error Bound for Linear Systems

Weak RSP of Order k of AT

Stability of Standard `1-Minimization

Linear Dantzig Selector

Special Cases

Notes

Stability of Nonlinear Sparse Optimization Methods

Introduction

Orthogonal Projection Operator

Polytope Approximation of Unit Balls

A Necessary Condition for Stability

`1-Minimization with `2-Norm Constraints

Nonlinear Dantzig Selector

The LASSO Problem

Summary

Notes

Reweighted `1-Algorithms

Merit Function for Sparsity

Reweighted `1-Methods

Numerical Experiments

Theoretical Analysis

Notes

Sparsity via Dual Density

Introduction

`0-Minimization with Nonnegativity Constraints

DDRW for Standard `0-Minimization

Sparsity Enhancement for Weighted `1-Minimizers

Notes

References

(0 Comentarios)

(0 Comentarios)